Since the ChatGPT boom, there has been a growing fear that AI will replace human labor. Indeed, technology has displaced workers in the past; we certainly don’t see many elevator operators nowadays. But the proliferation of dramatic news articles predicting job losses has made me think, and I don’t fully buy into their predictions. In this article I’ll share some thoughts about those predictions and tell you which jobs I do think are most at risk of an AI takeover.

What does the job really entail?

The professions that involve content creation may be the ones most often said to be at risk of an AI takeover. These include copywriting, fiction writing, non-fiction writing, journalistic writing, graphic design, and translation, to name a few. But there’s something that baffles me about these predictions: commentators don’t seem to take into account what doing those jobs truly entails.

Writing professions, for example, have surprisingly little to do with the act of writing itself (putting words together), which is what ChatGPT does. Consider the work of a copywriter. The bulk of the job involves understanding the customer and finding an interesting angle to promote the product. This is no easy task: the copywriter cannot be too dull, as the ad must stand out, but they cannot be too flashy either, as the product’s selling points may become unclear.

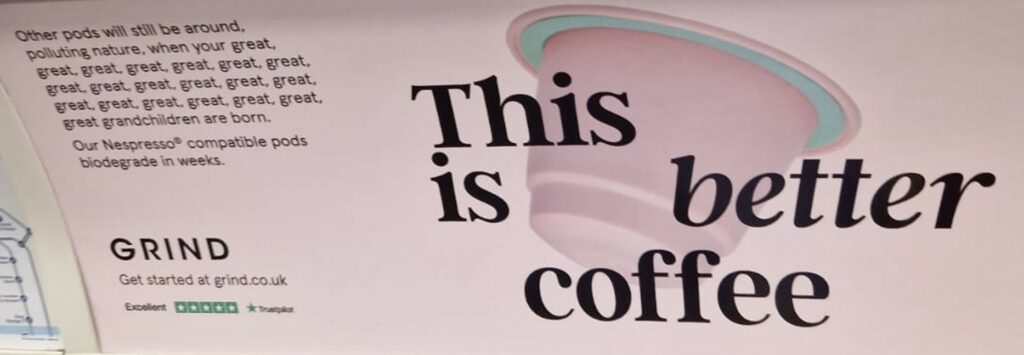

Consider this example of excellent copywriting, which I saw on the London Underground:

The ad manages to clearly convey the product’s selling point (the capsules are biodegradable) in an ingenious way (the “great great great…” part). When the consumer finishes reading the ad, they have no doubts about what the product is and why it’s beneficial, even though it’s explained in an unconventional way. Also, the copywriters managed to gracefully sneak in the answer to the most common question asked by customers—whether the capsules are compatible with Nespresso machines. The headline of the ad, “This is better coffee,” was written to serve a sales purpose: to perform audience selection (coffee drinkers) in a clear-cut way. Behind this ad there were probably hours of discussion and many different proposals.

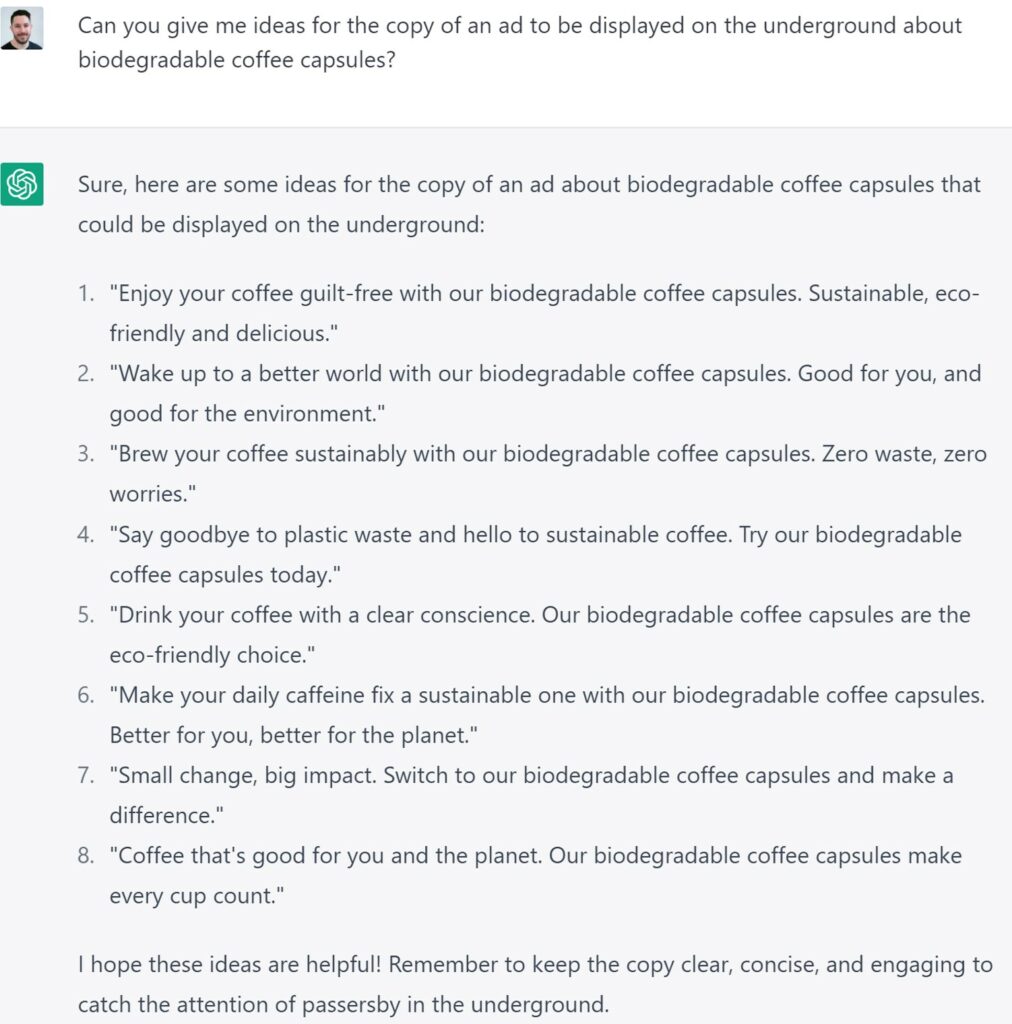

I asked ChatGPT to generate some ad ideas for this product. Here are the results:

They all make sense and are correct, but none of the suggestions is outstanding in the ways we’ve just discussed. And ChatGPT won’t mention Nespresso compatibility unless prompted.

Let’s consider another example: writing non-fiction books. People are saying AI will write books and threaten writers’ jobs. There’s even a dubious book on Amazon (mostly written with ChatGPT) telling people how to use ChatGPT to write non-fiction books.

But this misses the fact that writing the text itself is the easiest part of writing a non-fiction book. For example, when I wrote my book about AI, the most difficult part of the job was to find the right angle that would resonate with the audience. After I finished the first draft, I sent it out to dozens of beta readers from my intended audience who gave me their opinion of the book. It turns out that the tone and angle were sometimes inadequate. For example, they didn’t like that I said I’d tell “the truth” about something a few times, as this audience preferred to be presented with the facts and decide for themselves. Some mentioned they didn’t like the introduction because it didn’t tell any stories they could learn something from. They also suggested expanding some sections and shortening others. I updated the manuscript substantially and did more rounds of beta reading with new people until the book was widely accepted. This lengthy process is followed by most writers.

While ChatGPT could have helped me write some paragraphs once I knew what to say, it couldn’t have helped me decide what to say, what not to say, and which tone to use. Yet making those decisions, not writing sentences and paragraphs, was the most time-consuming part of the job.

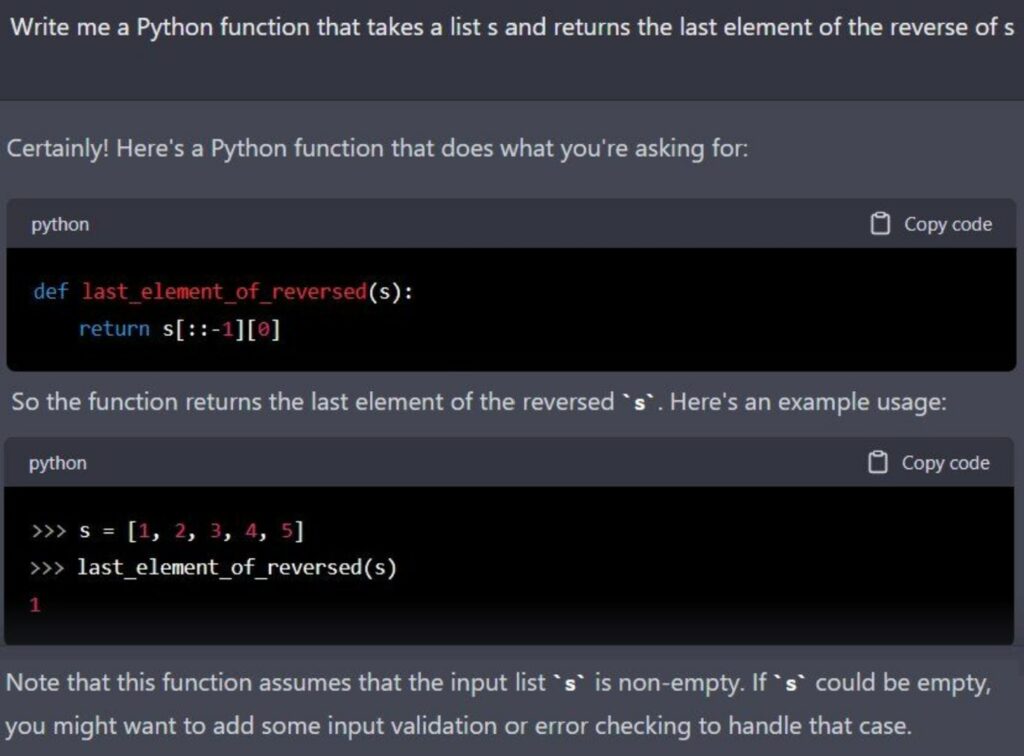

Another example of a job said to be replaced by AI is software engineering. ChatGPT can indeed write snippets of code given a prompt:

These formulaic coding tasks are common in job interviews. However, once again, this is not what the real job is about. The bulk of a software engineer’s job involves higher-level tasks, like understanding what the client wants, architecting a system, picking the best tools, and even advising the client on which features to develop first because they’d provide a higher return on the effort invested.

ChatGPT may be able to write some useful snippets of code, but what about all the rest? (By the way, the example shown above is incorrect—ChatGPT produced buggy code, as it often does.)

Finally, let’s discuss the job of doctors, who have been in the spotlight since ChatGPT passed the U.S. Medical Licensing Exam. An ER doctor tried to use ChatGPT for medical diagnosis and was disappointed. He said, “ChatGPT worked pretty well as a diagnostic tool when I fed it perfect information and the patient had a classic presentation. (…) Most actual patient cases are not classic.” He then went on to tell the story of how ChatGPT misdiagnosed a patient who was pregnant but didn’t know it, which happens more often than one would think in real life. As it turns out, being an ER doctor involves many more skills than the ones assessed in the licensing exam.

So, before we jump to conclusions and say a certain profession will be replaced by AI, we should thoroughly understand what the job entails. We cannot say AI will replace, say, copywriters without understanding what a copywriter really does. We cannot say AI will diagnose ER patients without knowing what goes on in the emergency room. It appears that, when we take the time to understand what it really takes to do a job, many of the professions that seem to be at risk turn out not to be.

That being said, not all jobs are safe…

Good enough vs excellent work

There is a sizable “good enough” work market. Consider the task of writing blog articles to drive traffic to a website. I’m sure you’ve seen those articles before; they often have titles like, “10 Things to See in Paris in 2023” or “Which Video Editing Software to Buy in 2023.” The owners of these websites just want to increase traffic in order to sell products and services, and don’t necessarily prioritize writing quality. So, they often hire cheap labor to produce a lot of “good enough” content, packed with keywords for search engines to pick up.

Here’s a screenshot of people offering such writing services on Fiverr:

The resulting articles, written for around 10 dollars each, tend not to be particularly good in terms of writing quality, but they do the job of attracting people to websites.

Something similar happens in the design sector. If you hire a high-caliber logo designer, for instance, they will spend significant time investigating the branding of other companies. They will then design a logo that fits nicely within your industry so that it’s relatable to customers yet differentiates itself from competitors. But if what you want is just a logo, you can save a lot of money by hiring a good enough designer for a fraction of the cost; the result will look nice but won’t be as effective at positioning your brand as a carefully researched logo.

So, instead of eliminating entire professions, I think AI may severely affect the tier of good enough work within each profession. After all, who would want to wait for a human worker to write an okay-ish article about Paris when it can be generated immediately with AI and for less money? Or who would want to hire a “good enough” logo designer when they can just use Midjourney? In my opinion, the people working in the good enough market are the ones who should be most worried about AI, and they should brace themselves for impact.

The market of excellent work, however, is much safer. After all, companies have been paying a premium for outstanding services for years when they could have probably chosen cheaper, good enough options.

If you want to future-proof your career, focus on high-end, excellent work. Make sure your job involves a lot of “human” tasks, like picking story angles, persuading, researching, architecting, strategizing, and selling, which AI can’t do well and doesn’t seem likely to do well anytime soon. But you might need to work extra hard to communicate to clients why the human touch is important and why there’s a quality gap between your work and AI’s, as a lot of people seem to think AI can do better work than it actually does. I heard a literary translator explain that, unlike ChatGPT, he translates what the text does, not what the text says.